Introduction

In November 2024, FactReview (FR) was recognized as a trusted flagger for Greece, in the context of the EU Digital Services Act (DSA).

In December 2024, FR was asked by the Greek Digital Service Coordinator, EETT, to provide available and substantiated information on current national systemic risks in Very Large Online Platforms (VLOPs) or Very Large Online Search Engines (VLOSEs). An updated list of such companies is available here.

Due to the short available time frame, FR has opted to provide a rapid report that summarizes what it considers the most pressing systemic risk: deceptive advertisements and fraud.

Meta’s lack of transparency on its ad assessment systems

Meta’s annual revenue of more than $100B depends almost exclusively on running ads on its platforms: Facebook, Instagram, Messenger, and WhatsApp.

Meta has established Advertising Standards to protect users from negative experiences, discriminatory practices, and fraud. However, despite presenting transparency as a policy principle, Meta is not fully forthcoming in establishing that these standards are consistently applied.

In its public policies, Meta states:

Our ad review system relies primarily on automated technology to apply our Advertising Standards to the millions of ads that are run across Meta technologies. However, we do use human reviewers to improve and train our automated systems, and in some cases, to manually review ads.

We reviewed the series of regulatory and transparency reports that Meta has published, mostly in the context of the EU DMA and DSA laws, but important aspects of the review process remain unclear.

In the document “Systemic Risk Assessment and Mitigation Report for Facebook” from August 2024, on page 75, Meta states that it has invested in improving its automated models and claims that its team is working 24/7 to review reported content. However, it does not provide any assessment of how accurate the automated assessment is or what percentage of user-flagged content is actually reviewed by people.

In the document “Digital Services Act Transparency Report for Facebook” of October 2024, on page 14, Meta provides the total numbers for removed ad and commerce content, with 83% being automated removals. However, it still fails to report the number of flagged ads and how many of these underwent human review.

On pages 20-22, Meta provides an overview of how it “maintains high overall accuracy”, but only provides a total “Automation Overturn Rate” of 7.47% across categories. This serves only as an estimate of the “false positive rate”, with the “false negative rate” remaining unknown, i.e. how much infringing content and ads fail to be detected. Industry-standard metrics like precision and recall are not reported. Meta claims that its automated detection systems are audited randomly and improved regularly, but in the absence of detailed metrics, it is not possible to assess these measures.
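To illustrate why an overturn rate alone is insufficient, the following minimal sketch computes precision and recall from a confusion matrix. All counts are hypothetical and chosen only for illustration; they are not Meta's figures. The sketch also assumes that overturned removals can be treated as false positives, which may not match how Meta defines its "Automation Overturn Rate".

```python
from dataclasses import dataclass


@dataclass
class ModerationCounts:
    """Hypothetical outcome counts for an automated ad review system."""
    true_positives: int   # infringing ads that were correctly removed
    false_positives: int  # compliant ads wrongly removed (assumed: later overturned)
    false_negatives: int  # infringing ads that were never removed


def precision(c: ModerationCounts) -> float:
    # Share of removals that were justified.
    return c.true_positives / (c.true_positives + c.false_positives)


def recall(c: ModerationCounts) -> float:
    # Share of infringing ads that were actually caught.
    return c.true_positives / (c.true_positives + c.false_negatives)


def overturned_share(c: ModerationCounts) -> float:
    # Share of removals that were wrong; this is all an overturn rate can reveal.
    return c.false_positives / (c.true_positives + c.false_positives)


# Illustrative numbers only, not taken from any Meta report.
counts = ModerationCounts(true_positives=900, false_positives=100, false_negatives=600)

print(f"precision:        {precision(counts):.2f}")
print(f"overturned share: {overturned_share(counts):.2f}")
print(f"recall:           {recall(counts):.2f}")
```

In this hypothetical case, only 10% of removals are wrong, yet 40% of infringing ads are never removed. A low overturn rate is therefore compatible with a large volume of undetected fraud, and recall cannot be computed without the false negative count that Meta does not publish.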

Another concern is the availability of moderators. Meta does not report the number of moderators working on ads separately, only their total number per language. Reviewing the figures of moderators per language in Table 42.2.a and users per country in Table 42.3 (excluding international languages), we can make several observations:

a) The median is about 157k users per moderator.

b) More prominent countries, like Germany and Italy, report higher moderation coverage, whereas less prominent countries, like Latvia, Estonia, and Hungary, report lower coverage (Germany: 81k users per moderator, Latvia: 367k users per moderator).

c) Greece ranks below average (14th out of 20), with 213k users per moderator (30 moderators for 6.4 million users).
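The coverage figure for Greece can be reproduced with simple arithmetic. The sketch below uses only the numbers quoted above (30 moderators, 6.4 million users, and a median of about 157k users per moderator); the function name is our own.

```python
def users_per_moderator(users: int, moderators: int) -> float:
    """Return how many users each moderator nominally covers."""
    return users / moderators


# Figures quoted in this report for Greece.
greece = users_per_moderator(users=6_400_000, moderators=30)

# Median across the compared languages, as quoted above (approximate).
MEDIAN_USERS_PER_MODERATOR = 157_000

print(round(greece / 1000))                          # 213 (thousand users per moderator)
print(round(greece / MEDIAN_USERS_PER_MODERATOR, 2)) # 1.36
```

By this measure, each Greek-language moderator nominally covers roughly 36% more users than the median.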

Given the colossal amount of content shared every minute on Meta’s platforms and the massive profit that Meta makes (net income for Sept. 2023-2024 was $56B, an 87% annual increase), we consider the moderator coverage in most languages, including Greek, to be minuscule.

Additionally, on page 9, Meta states that “all Article 16 DSA notices are processed using manual review”, which seems to be the only way for a user flagging potentially illegal content to be certain that it will be reviewed by a person. However, the form for this request appears to be accessible only in an obscure part of Meta’s Help Center, which we would not expect the vast majority of users to be aware of or to locate readily. This contrasts with the Article 16 requirement that such mechanisms be “easy to access and user-friendly”.

Fraudulent ads are rampant on Meta’s platforms

On 30 April 2024, the European Commission (EC) announced that it was opening formal proceedings against Facebook and Instagram under the DSA.

The press release lists four areas of concern:

a) Visibility of political content.

b) Lack of a real-time monitoring tool ahead of EU elections.

c) The impractical mechanism for flagging illegal content.

d) Improper handling of disinformation and deceptive advertisements.

In the context of this report, we will focus solely on the last of these: deceptive ads and related fraud.

The EC press release was welcomed by civil society organizations like EU DisinfoLab and CheckFirst, which noted a preceding series of reports that had comprehensively documented the scale of the problem (examples by Reset.tech, AI Forensics, EU DisinfoLab, Rappler, and Qurium). The reports uncovered networks of scammers and propagandists that had managed to bypass Meta’s moderation and were able to mass-run coordinated ads on consumer fraud or political disinformation.

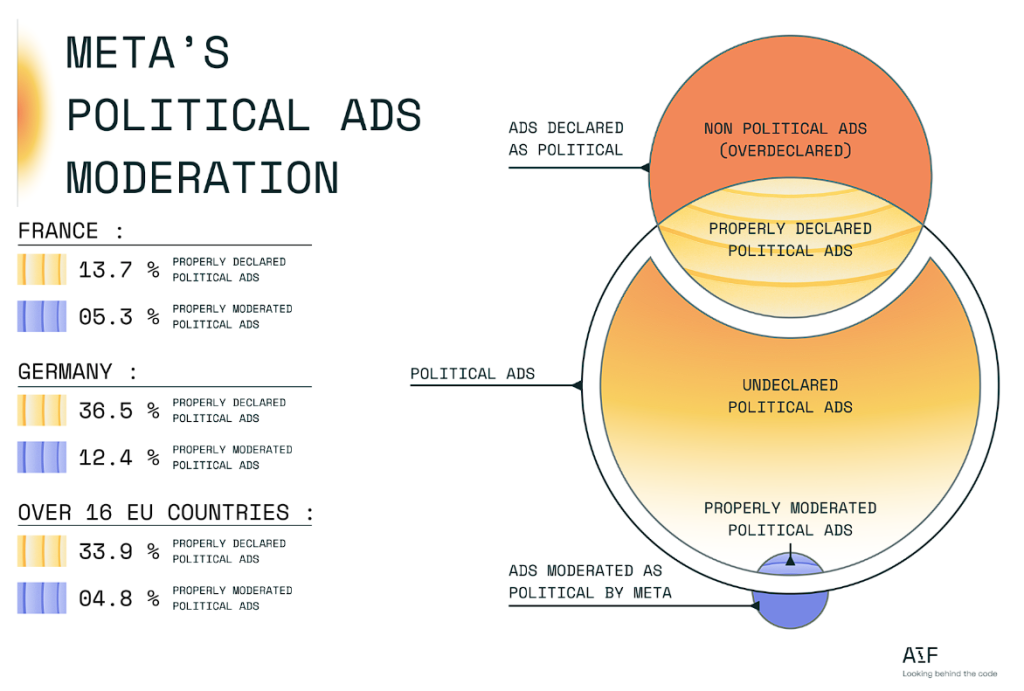

For example, AI Forensics conducted a comprehensive analysis of all ads shown on Meta’s platforms in 16 EU countries for several months. It found that, in most cases, Meta’s systems failed to even detect political ads as political, sparing them from stricter rules and transparency requirements.

Another concerning case is the Doppelganger operation, a disinformation campaign by Russia against Ukraine, first reported in August 2022. Meta still fails to properly block it to this day.

The latest report, from 17 January 2025, shows that the Russia-associated entity SDA has managed to get political and time-relevant ads repeatedly approved with fake identifying information, despite SDA being a known sanctioned entity with an established modus operandi. This entity used textual and visual tricks to circumvent Meta’s automated moderation, even though independent researchers were able to detect them automatically with minimal resources compared to Meta. This report also underlines the conflict of interest that Meta faces, as SDA ads in the EU alone generated at least $338k in earnings for Meta.

In November 2024, a survey commissioned by the Bank of Ireland suggested that commercial fraudulent ads are much more widespread than their political counterparts. One-third of Irish adults reported having been targeted by some fraudulent ad, with Facebook and Instagram being the most common social media platforms. Even more people, 47%, reported having seen ads for investments or cryptocurrency featuring well-known personalities, politicians, or musicians – a common technique for scams. This likely suggests that many scams were not identified as such by unsuspecting users.

More recent reports have corroborated the continued scale of the problem, for example by the Bureau of Investigative Journalism and CERT Polska.

Meta has taken some action, albeit inadequate, when high-profile reports are published about certain scamming networks. However, when average users attempt to flag obvious scams, flagging is often ineffective, as stated in online forums and professional reporting. This can lead users to consider future flagging attempts meaningless, which also means that flagging statistics do not fully reflect the actual user experience.

The extent of the problem in Greece

No comprehensive investigation has taken place specifically for fraudulent ads on Meta’s platforms in Greece. However, a series of reports and observations suggest that the problem is also rampant here.

It should be clarified that Meta’s social media are the most popular in Greece, with total adult usage at 64% for Facebook, 47% for Instagram, and 49% for Messenger, according to the Digital News Report 2024. Therefore, general online fraud statistics largely reflect fraud on Meta’s platforms as well.

Officially, the Hellenic Police recorded more than 5k cases of fraud in 2024, many of them promoted through social media, though no explicit category for such ads was provided. Cross-referencing the total fraud statistics with internet fraud shows that the large majority of fraud occurs online, and online fraud records are increasing rapidly. Specifically, in 2018, 1,093 cases of online fraud were recorded, whereas the number surged to 5,261 in 2023. Additionally, the director of the Cybercrime Prosecution Sub-Directorate of Northern Greece mentioned recent reports about social media ads impersonating famous people.

A surge in online fraud has also been recorded by SafeLine, the most prominent online abuse helpline in Greece and a recognized trusted flagger. SafeLine additionally ranks economic fraud as the top category of abuse being reported.

Regarding specific fraudulent ads on Meta’s platforms, a series of analyses have been published by fact-checking organizations in Greece:

FactReview: Example 1, Example 2, Example 3

Greece Fact-Check: Example 1, Example 2

The articles show that the most common types of ad fraud are celebrity impersonation and fake gifts or competitions. The primary goals of these frauds are to phish personal and financial details or to infect personal devices with malware.

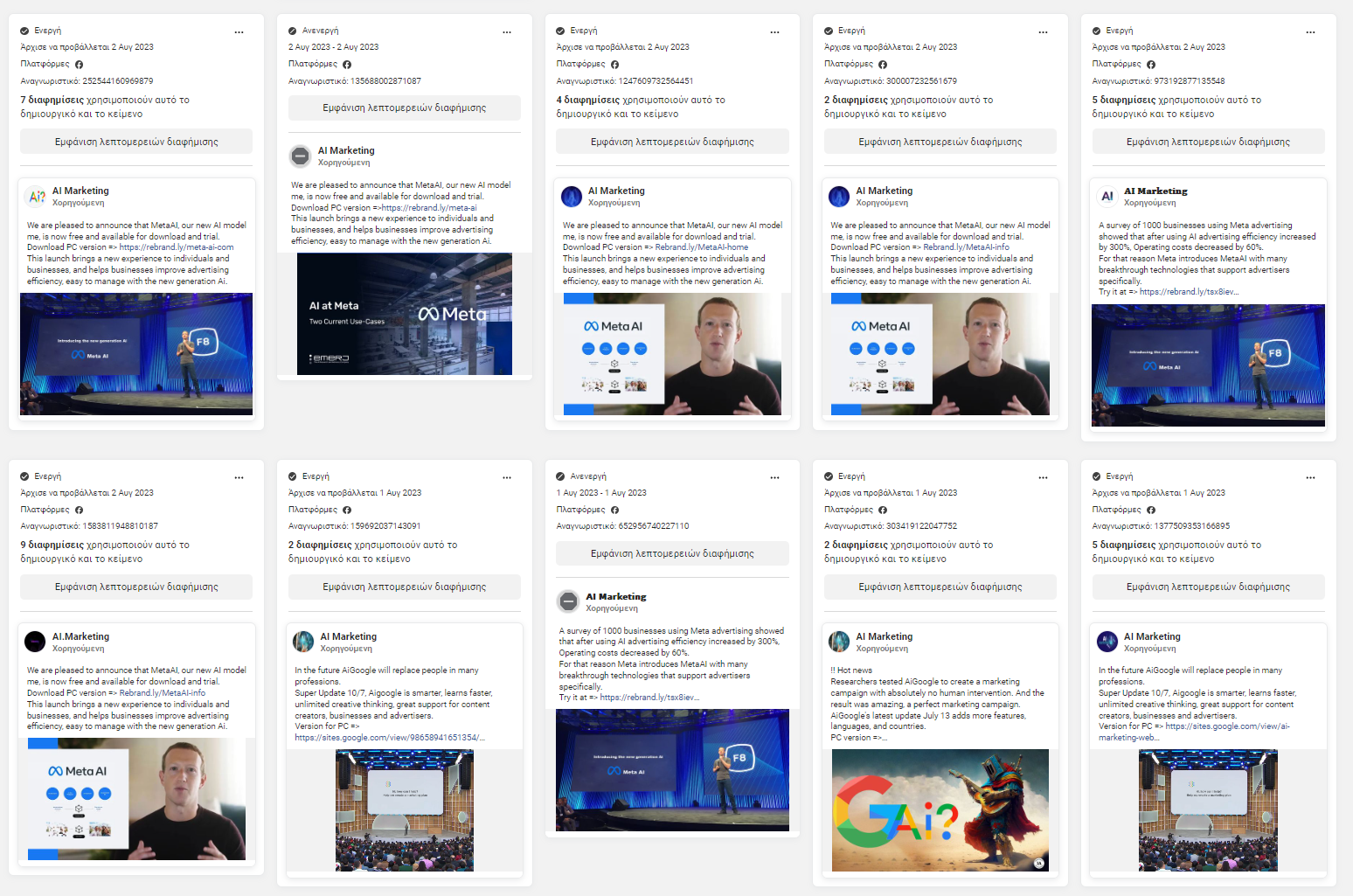

The vast majority of these ads can be easily identified as fraudulent by a moderately experienced online user and very easily by an experienced moderator or fact-checker. Besides consistent themes of fraudulent ads that could be readily identified with modern AI, there are also many technical hallmarks that should allow for automatic takedown or at least flagging for human review, such as the publication of multiple nearly identical ads and links to untrustworthy sites that use a series of redirects.
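As an illustration of how little is needed to surface one of these hallmarks, the following minimal sketch flags nearly identical ad texts using word shingles and Jaccard similarity. It is not a description of Meta's systems; the ad texts, identifiers, shingle size, and threshold are all hypothetical values chosen for the example.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping k-word sequences (lowercased)."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicate_pairs(ads: dict[str, str], threshold: float = 0.6) -> list[tuple[str, str]]:
    """Return pairs of ad IDs whose texts are at least `threshold` similar."""
    sets = {ad_id: shingles(text) for ad_id, text in ads.items()}
    return [
        (x, y)
        for x, y in combinations(sorted(sets), 2)
        if jaccard(sets[x], sets[y]) >= threshold
    ]


# Hypothetical ad texts, invented for this example.
ads = {
    "ad_1": "Famous presenter reveals secret crypto platform that makes you rich in one week",
    "ad_2": "Famous presenter reveals secret crypto platform that makes you rich in one month",
    "ad_3": "Fresh vegetables delivered to your door every morning from local farms",
}

print(near_duplicate_pairs(ads))  # [('ad_1', 'ad_2')]
```

A flag of this kind would not prove fraud on its own, but it could route clusters of near-identical ads to human review once any single member of the cluster has been taken down.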

One striking example of Meta’s inability to implement basic ad moderation was identified in August 2023. Scammers took advantage of the popularity of AI advancements and impersonated Google and Meta, including its CEO Mark Zuckerberg, to direct users to install malware. There was no indication that the scammers faced any obstacle in running this easily uncovered campaign in many countries worldwide, including Greece. Similar campaigns were identified running for at least several months.

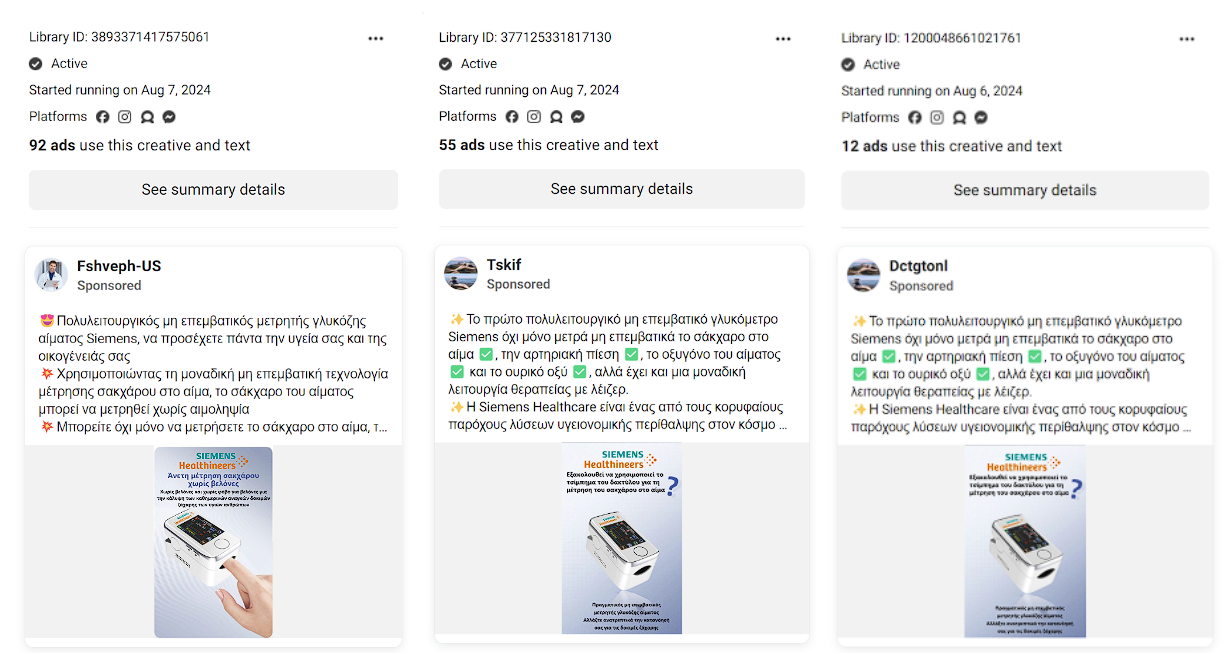

Less frequent but potentially more dangerous are health and pseudoscience scams. An example uncovered by FR in August 2024 concerned an alleged “non-invasive blood glucose meter by Siemens Healthineers”. FR found that the ad falsely impersonated said company, and that no such working product existed on the market.

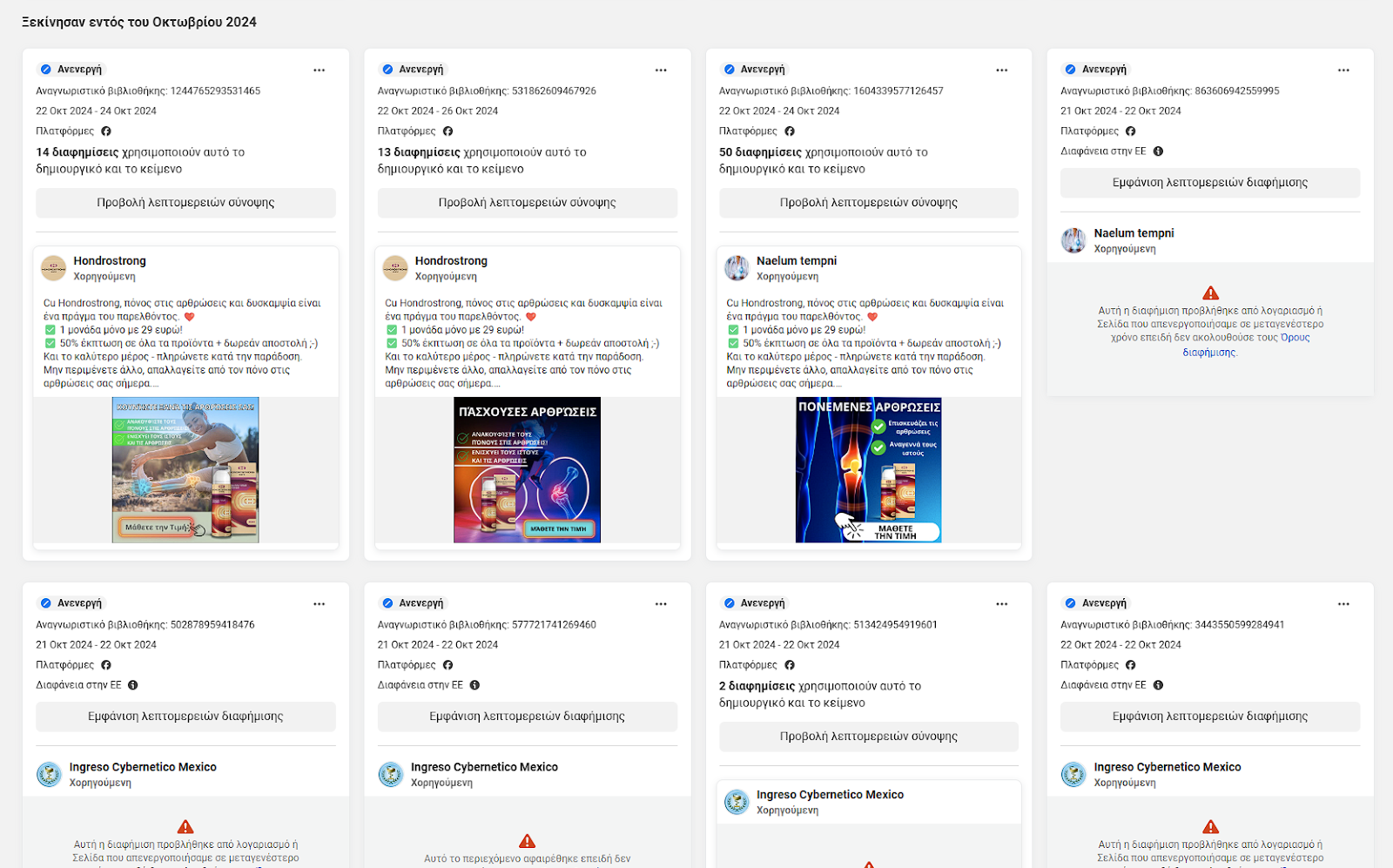

Another health fraud, uncovered by FR in February 2024, concerned a product named “Hondrostrong”, allegedly “a certified treatment for rheumatic diseases”. FR found that the corresponding ads impersonated celebrities and doctors, and that the product was not medically recognized. The Hellenic Society for Rheumatology and the National Medicines Agency (EOF) were notified and published statements alerting the public and condemning the scam.

Upon reviewing the Meta Ad Library again for this report, we searched for the term “Hondrostrong” on 30 January 2025. We discovered that while many such ads had been deleted by Meta as policy-infringing, several campaigns with the scam had run in October 2024 without facing any obstacles. Meta seems to have only disabled certain identical ads without establishing human review for the rest of the ads using the scam’s identifying name.

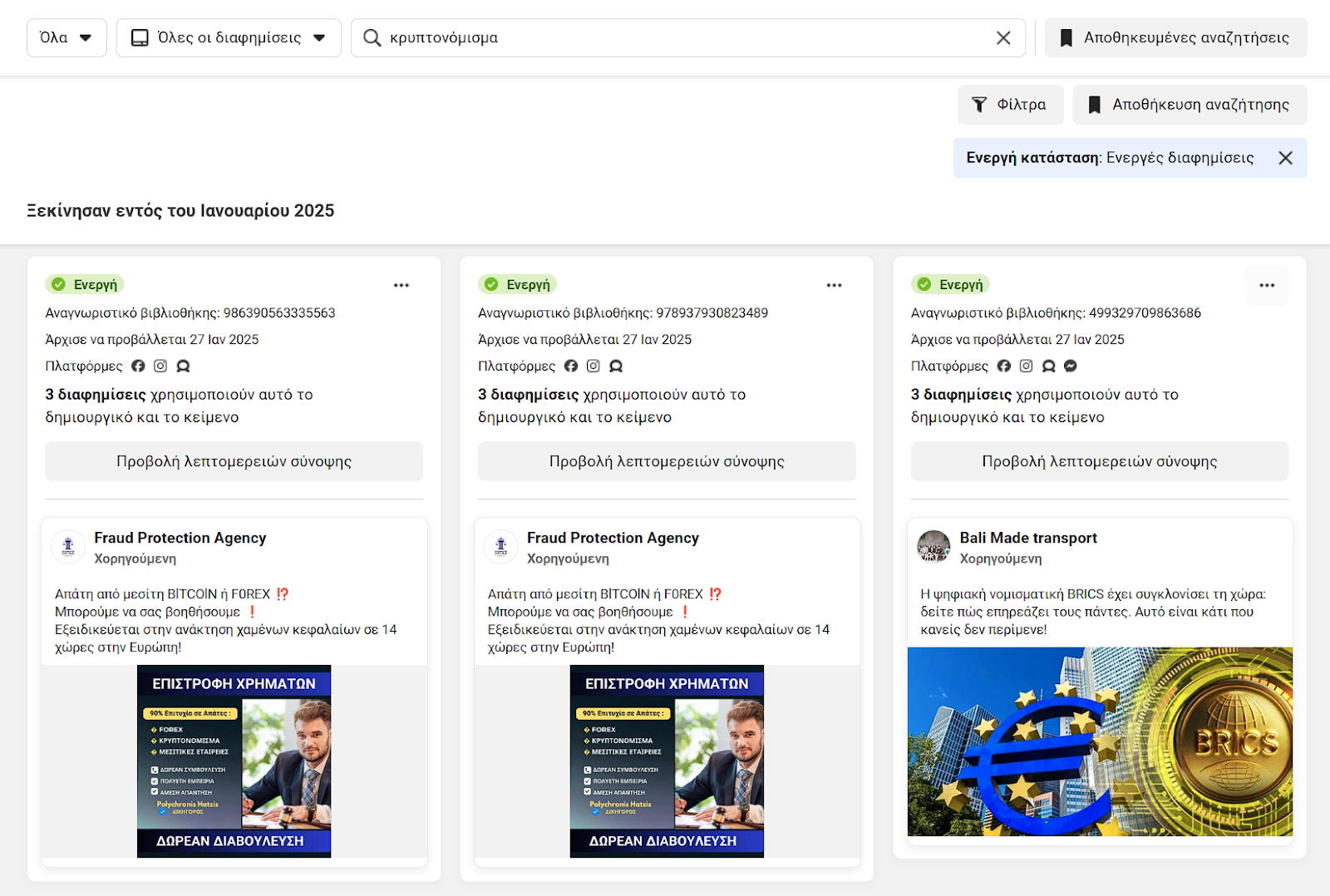

Next, for this report’s purposes, on 30 January 2025, we searched the Ad Library for one of the common themes of scam ads: cryptocurrency. In the third position, we found another such scam.

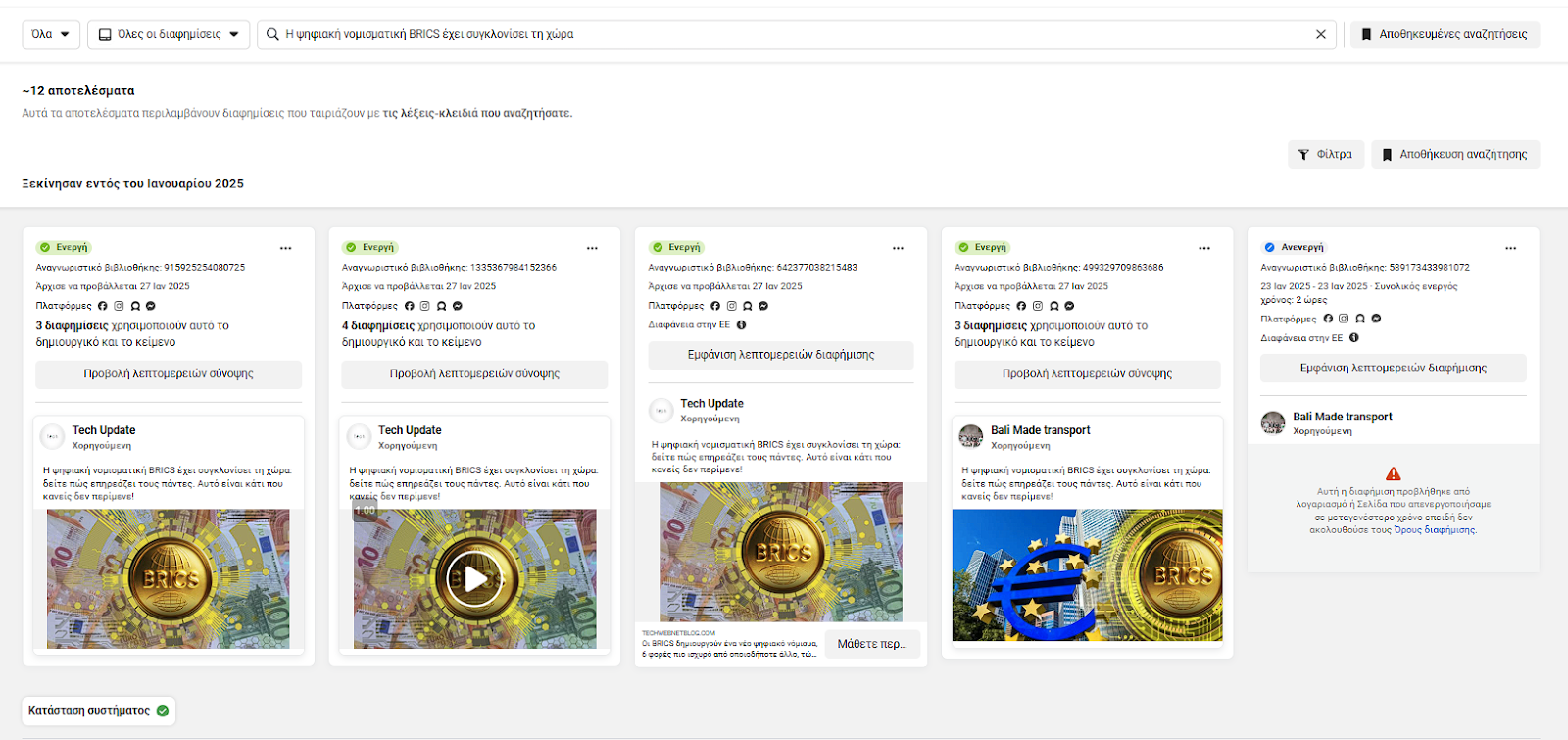

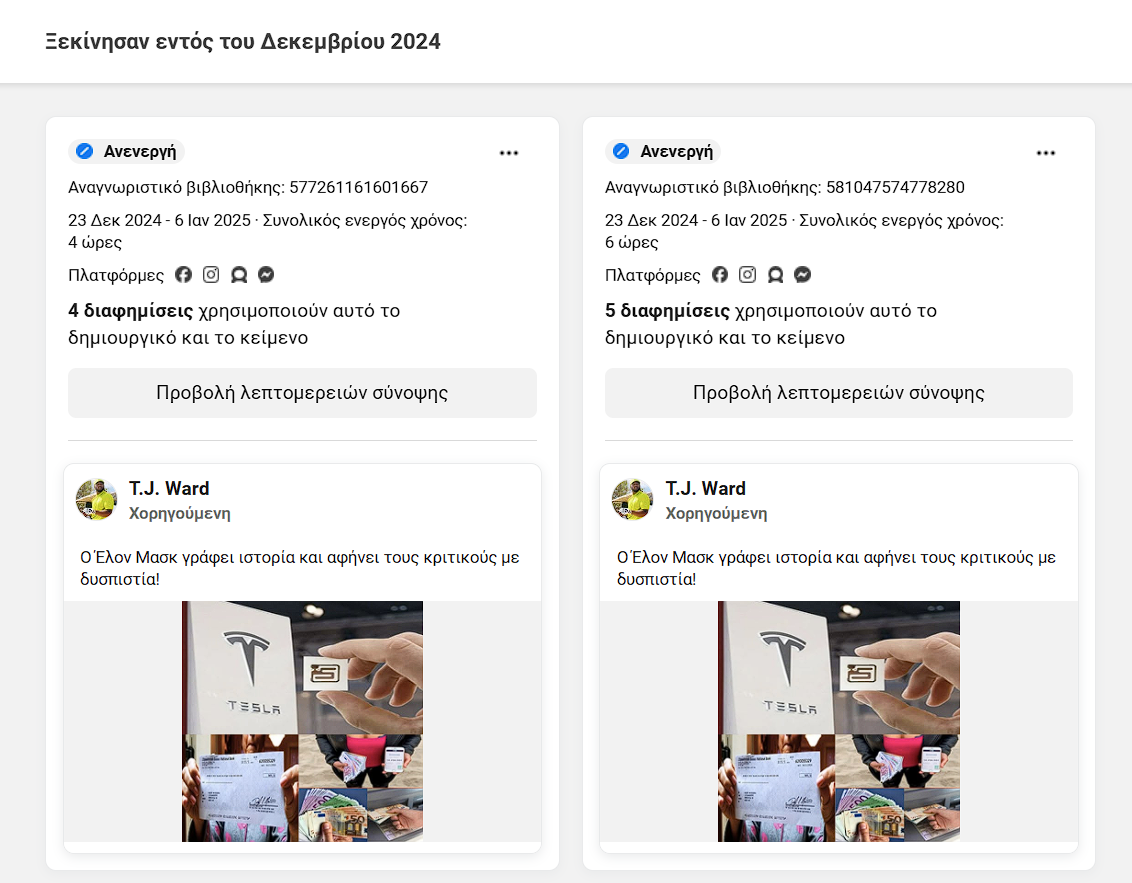

We searched again for its distinctive phrasing and instantly found multiple identical campaigns. One specific instance had been deactivated by Meta, but this did not trigger any manual review of the remaining ads with this phrasing, or other ads by the same ad account.

Lastly, for this report, an independent user with residence in both Greece and Cyprus offered to record all ad scams they encountered over one month on their personal Facebook profile during everyday use. We attach their 36 screenshots, with their comment that some ads were shown to them multiple additional times, and provide the Ad Library identification for one of the examples they recorded.

Short suggestions

Meta should be asked to:

1. Provide an extensive technical report on how it applies moderation to advertisements, including the specifications of the automated systems it uses and in-depth technical and flagging metrics.

2. Significantly increase the number of human moderators, especially in underrepresented languages, and involve them much more extensively in ad evaluation.

3. Require manual ad approval in campaigns with suspect themes, domains, or records. These criteria should be independently verifiable and capable of being flagged for omissions.

Editorial team