- Executive summary

- 1. Introduction and methodology

- 2. Analysis of VLOPs and VLOSEs

- 2.1 Key indicators and statistics

- 2.2 Illustrative cases of significant notices

- 2.2.1 Short descriptions of selected cases

- Case 1: Phishing scam to harvest personal and financial data using malicious impersonation of well-known retail brands (Decathlon / Public / Plaisio)

- Case 2: Scam promoting non-authorized “treatment” products for various forms of diabetes (e.g., “Insuvit,” “Gluconax”), using misleading medical claims and misappropriation of symbols/credentials

- Case 3: Scam involving a purported medical device, “Omron Smartwatch for health,” claiming non-invasive blood sugar/ECG measurement, using suspicious domains and aggressive sales tactics

- 2.2.2 Brief explanation of their significance

- 2.3 Trends and analysis of reported topics

- 3. Independence and transparency

- 4. FactReview activity beyond submitting notices to online platforms

- 5. Challenges and recommendations

Executive summary

FactReview has been awarded the status of a “Trusted Flagger” for illegal content in Greece by the National Commission for Telecommunications and Posts (EETT), for a three-year term. Under Article 22 of the DSA, trusted flaggers are required to publish (at least annually) “easy-to-understand and sufficiently detailed” reports on the notices they submit (via the Article 16 mechanisms), categorizing, at minimum, (a) the identity of the hosting service provider, (b) the type of allegedly illegal content, and (c) the action taken by the provider. In addition, the report must explain the processes that ensure the trusted flagger’s continued independence.

This reporting period covers the calendar year 2025. The previous report, covering January 2, 2024 to May 31, 2025, is available here.

As a trusted flagger, FactReview submits requests for review of potentially illegal content to Very Large Online Platforms (VLOPs), which are required to give such requests priority. Each submission includes detailed documentation and legal reasoning. The 2025 activity profile shows a clear pattern of high-volume, systematically replicated misleading advertisements of a medical/health nature, often combining: (i) implausible medical claims (e.g., “non-invasive” glucose measurement and broader promises of diagnosis), (ii) product compliance/safety issues and unlawful advertising of purported medical devices, (iii) consumer-protection violations and lack of trader identity, and (iv) in some cases, abusive use of trademarks/brand identities of recognizable companies.

Below are brief statistics on the notices submitted by FactReview to online platforms in 2025:

- Total notices: 49

- Topic area: medical and financial scams

- Platform: Facebook

- Acceptance rate: 100%

- Average time to decision: 2.65 days

During 2025, after identifying fundamental problems in the way multiple platforms operate, FactReview emphasized systemic approaches. By way of example, in March 2025 it was invited to the European Parliament to present a report on Meta’s (Facebook/Instagram) fundamentally problematic automated assessment systems.

It also conducted research and development on a technical system that, once mature, could enable the identification of illegal material and the submission of notices at a scale orders of magnitude greater than today, under human supervision.

1. Introduction and methodology

1.1 Purpose of the report

The purpose of this report is to provide the Digital Services Coordinator (the National Commission for Telecommunications and Posts) and other competent bodies with a comprehensive record of FactReview’s 2025 activities as an officially designated Trusted Flagger under the Digital Services Act (DSA) in Greece. The report documents the nature, volume, and outcomes of all items of content that were flagged as potentially illegal on Very Large Online Platforms, with an emphasis on transparency, accountability, and the protection of consumers and the public from fraudulent and misleading practices online. This report aims to fulfill our obligations under Article 22 of the DSA by providing an evidence-based overview of our work, facilitating effective oversight, and supporting continuous improvement in cooperation with online platforms.

1.2 Brief description of the methodology

FactReview applies a multi-layer methodology to ensure the accuracy, relevance, and legal substantiation of each Trusted Flagger notice:

- Content identification: Cases are selected for review through continuous monitoring of material across all major online platforms (for example, via the Facebook Ad Library) and through user submissions.

- Preliminary assessment: Each case is initially assessed to determine whether it contains potentially illegal content, including fraud, deception, or other infringements.

- Evidence collection: The team collects and archives all relevant evidence, such as URLs, screenshots, and, where required, video. Secure sandboxed environments are used to investigate suspicious links safely and to document both the process and user-facing risks.

- Legal analysis: The legal and regulatory framework applicable to each case is analyzed, with references to Greek criminal, commercial, and consumer law, as well as relevant EU directives, so that each notice rests on a clear legal basis.

- Notice submission: For each case, a detailed notice is drafted and submitted via the Trusted Flagger channel of the relevant VLOP, attaching supporting documentation and citing the relevant provisions.

- Follow-up monitoring: The status and outcomes of all notices are tracked systematically, including response times and platform decisions, to evaluate performance and ensure transparency.

2. Analysis of VLOPs and VLOSEs

2.1 Key indicators and statistics

2.1.1 Trusted Flagger activity data table for 2025

Categories of illegal content:

- K22: Trademark infringement

- K46: Risk for public health

- K51: Inauthentic user reviews

- K52: Impersonation or account hijacking

- K53: Phishing

- K65: Insufficient information on traders

- K66: Illegal offer of regulated goods and services (e.g., health)

- K67: Sale of non-compliant or counterfeit products (e.g., dangerous toys)

| Report no. | Full submission report | Platform | Illegal content category | Submission date | Decision notification date | Decision time (days) | Platform action |
|---|---|---|---|---|---|---|---|
| 1 | Full report | Facebook | K53, K52, K22, K51 | 2025-04-29 | 2025-04-29 | 0 | The notice was accepted and the content was removed |
| 2 | Full report | Facebook | K46, K66, K52, K51 | 2025-04-29 | 2025-05-01 | 2 | The notice was accepted and the content was removed |
| 3 | Full report | Facebook | K53, K52, K22, K51 | 2025-05-07 | 2025-05-09 | 2 | The notice was accepted and the content was removed |
| 4 | Full report | Facebook | K53, K52, K22, K51 | 2025-05-07 | 2025-05-09 | 2 | The notice was accepted and the content was removed |
| 5 | Full report | Facebook | K46, K66, K51, K52, K65 | 2025-05-10 | 2025-05-14 | 4 | The notice was accepted and the content was removed |
| 6 | Full report | Facebook | K46, K66, K67, K22, K65 | 2025-05-28 | 2025-05-30 | 2 | The notice was accepted and the content was removed |
| 7 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-05-28 | 2025-06-04 | 7 | The notice was accepted and the content was removed |
| 8 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-05-28 | 2025-05-30 | 2 | The notice was accepted and the content was removed |
| 9 | Full report | Facebook | K46, K66, K67, K22, K65 | 2025-05-28 | 2025-05-30 | 2 | The notice was accepted and the content was removed |
| 10 | Full report | Facebook | K46, K66, K51, K65 | 2025-05-28 | 2025-05-30 | 2 | The notice was accepted and the content was removed |
| 11 | Full report | Facebook | K46, K66, K51, K65 | 2025-06-29 | 2025-06-30 | 1 | The notice was accepted and the content was removed |
| 12 | Full report | Facebook | K46, K66, K51, K65 | 2025-06-29 | 2025-06-30 | 1 | The notice was accepted and the content was removed |
| 13 | Full report | Facebook | K46, K66, K51, K65 | 2025-06-29 | 2025-06-30 | 1 | The notice was accepted and the content was removed |
| 14 | Full report | Facebook | K46, K66, K51, K65 | 2025-06-29 | 2025-06-30 | 1 | The notice was accepted and the content was removed |
| 15 | Full report | Facebook | K46, K66, K51, K52, K65 | 2025-06-29 | 2025-06-30 | 1 | The notice was accepted and the content was removed |
| 16 | Full report | Facebook | K46, K66, K51, K52, K65 | 2025-06-29 | 2025-06-30 | 1 | The notice was accepted and the content was removed |
| 17 | Full report | Facebook | K46, K66, K51, K52, K65 | 2025-06-29 | 2025-06-30 | 1 | The notice was accepted and the content was removed |
| 18 | Full report | Facebook | K53, K52, K22, K51 | 2025-10-04 | 2025-10-06 | 2 | The notice was accepted and the content was removed |
| 19 | Full report | Facebook | K46, K66, K51, K65 | 2025-10-04 | 2025-10-06 | 2 | The notice was accepted and the content was removed |
| 20 | Full report | Facebook | K46, K66, K65 | 2025-11-20 | 2025-11-24 | 4 | The notice was accepted and the content was removed |
| 21 | Full report | Facebook | K46, K66, K65 | 2025-11-20 | 2025-11-26 | 6 | The notice was accepted and the content was removed |
| 22 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-11-24 | 2025-11-28 | 4 | The notice was accepted and the content was removed |
| 23 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-11-24 | 2025-11-28 | 4 | The notice was accepted and the content was removed |
| 24 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-11-25 | 2025-11-28 | 3 | The notice was accepted and the content was removed |
| 25 | Full report | Facebook | K46, K66, K67, K22, K65 | 2025-11-25 | 2025-11-28 | 3 | The notice was accepted and the content was removed |
| 26 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-11-25 | 2025-11-28 | 3 | The notice was accepted and the content was removed |
| 27 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-11-25 | 2025-12-04 | 9 | The notice was accepted and the content was removed |
| 28 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-11-27 | 2025-12-02 | 5 | The notice was accepted and the content was removed |
| 29 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-11-27 | 2025-12-04 | 7 | The notice was accepted and the content was removed |
| 30 | Full report | Facebook | K46, K66, K67, K22, K65 | 2025-11-27 | 2025-12-04 | 7 | The notice was accepted and the content was removed |
| 31 | Full report | Facebook | K46, K66, K67, K22, K65 | 2025-11-27 | 2025-12-04 | 7 | The notice was accepted and the content was removed |
| 32 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-22 | 2025-12-24 | 2 | The notice was accepted and the content was removed |
| 33 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-22 | 2025-12-24 | 2 | The notice was accepted and the content was removed |
| 34 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-22 | 2025-12-24 | 2 | The notice was accepted and the content was removed |
| 35 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-23 | 2025-12-24 | 1 | The notice was accepted and the content was removed |
| 36 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-23 | 2025-12-24 | 1 | The notice was accepted and the content was removed |
| 37 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-23 | 2025-12-24 | 1 | The notice was accepted and the content was removed |
| 38 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-23 | 2025-12-24 | 1 | The notice was accepted and the content was removed |
| 39 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-23 | 2025-12-25 | 2 | The notice was accepted and the content was removed |
| 40 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-23 | 2025-12-25 | 2 | The notice was accepted and the content was removed |
| 41 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-23 | 2025-12-25 | 2 | The notice was accepted and the content was removed |
| 42 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-23 | 2025-12-25 | 2 | The notice was accepted and the content was removed |
| 43 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-29 | 2025-12-31 | 2 | The notice was accepted and the content was removed |
| 44 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-29 | 2025-12-31 | 2 | The notice was accepted and the content was removed |
| 45 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-29 | 2025-12-31 | 2 | The notice was accepted and the content was removed |
| 46 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-29 | 2025-12-31 | 2 | The notice was accepted and the content was removed |
| 47 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-29 | 2025-12-31 | 2 | The notice was accepted and the content was removed |
| 48 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-29 | 2025-12-31 | 2 | The notice was accepted and the content was removed |
| 49 | Full report | Facebook | K46, K66, K67, K22, K65, K51 | 2025-12-29 | 2025-12-31 | 2 | The notice was accepted and the content was removed |
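The decision times in the table are the number of calendar days between submission and decision notification, and the 2.65-day average quoted in the executive summary is their mean over all 49 notices. The following Python sketch reproduces that arithmetic. The date pairs and counts are transcribed from the table; grouping identical pairs into `(submitted, decided, count)` tuples and the helper name `decision_days` are illustrative choices, not part of FactReview's tooling.

```python
from datetime import date

# (submission date, decision notification date, number of notices with that pair),
# transcribed from the 2025 activity table.
NOTICE_GROUPS = [
    ("2025-04-29", "2025-04-29", 1),
    ("2025-04-29", "2025-05-01", 1),
    ("2025-05-07", "2025-05-09", 2),
    ("2025-05-10", "2025-05-14", 1),
    ("2025-05-28", "2025-05-30", 4),
    ("2025-05-28", "2025-06-04", 1),
    ("2025-06-29", "2025-06-30", 7),
    ("2025-10-04", "2025-10-06", 2),
    ("2025-11-20", "2025-11-24", 1),
    ("2025-11-20", "2025-11-26", 1),
    ("2025-11-24", "2025-11-28", 2),
    ("2025-11-25", "2025-11-28", 3),
    ("2025-11-25", "2025-12-04", 1),
    ("2025-11-27", "2025-12-02", 1),
    ("2025-11-27", "2025-12-04", 3),
    ("2025-12-22", "2025-12-24", 3),
    ("2025-12-23", "2025-12-24", 4),
    ("2025-12-23", "2025-12-25", 4),
    ("2025-12-29", "2025-12-31", 7),
]


def decision_days(submitted: str, decided: str) -> int:
    """Calendar days between submission and decision notification."""
    return (date.fromisoformat(decided) - date.fromisoformat(submitted)).days


total_notices = sum(count for _, _, count in NOTICE_GROUPS)
total_days = sum(decision_days(s, d) * count for s, d, count in NOTICE_GROUPS)
average_days = total_days / total_notices

print(total_notices, round(average_days, 2))  # prints "49 2.65"
```

Working from the raw dates rather than the precomputed day column makes it easy to spot a row whose stated decision time does not match its dates.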

2.2 Illustrative cases of significant notices

2.2.1 Short descriptions of selected cases

Case 1: Phishing scam to harvest personal and financial data using malicious impersonation of well-known retail brands (Decathlon / Public / Plaisio)

Summary:

Sponsored ads on Facebook falsely presented a supposed “former employee” of a well-known retail company who allegedly “takes revenge” for poor working conditions by offering a “secret discount” on branded products at unrealistically low prices. Users were redirected to fake websites unrelated to the real brands, where they were prompted to enter sensitive payment details (e.g., card details) to complete the “purchase.” The scam was systematically accompanied by fake reviews and fabricated comments to increase credibility; in several cases there were indications of subscription traps/hidden charges via terms of use that the user accepted unknowingly. Beyond exposing users to the risk of personal-data compromise, the scam created an immediate risk of financial exploitation.

Case 2: Scam promoting non-authorized “treatment” products for various forms of diabetes (e.g., “Insuvit,” “Gluconax”), using misleading medical claims and misappropriation of symbols/credentials

Summary:

Facebook ads promoted non-authorized products as a “cure” or “permanent solution” for diabetes (primarily type 2), using scientifically unsubstantiated claims, presenting the products as supposedly approved by Greek authorities, and/or making misleading references to public health bodies. In individual cases, misuse of state symbols was identified, along with deceptive invocation of “experts” or purported scientific credentials, and extensive use of fabricated testimonials/ratings to create false credibility. The websites linked to the advertisements collected personal information and/or payment data for products of questionable origin, targeting vulnerable users with chronic illnesses and creating risks of both financial exploitation and public-health harm (due to potential delay/abandonment of proper treatment).

Case 3: Scam involving a purported medical device, “Omron Smartwatch for health,” claiming non-invasive blood sugar/ECG measurement, using suspicious domains and aggressive sales tactics

Summary:

Sponsored Facebook ads directed users to external websites promoting an alleged “Omron Smartwatch for health,” attributing it to a well-known and trusted medical-device company. The material claimed the device performs non-invasive blood sugar measurement, tracks uric acid, records ECG, and provides other “medical” functions alongside smartwatch features, at an exceptionally low price and with time-limited pressure offers. The websites were hosted on unusual and untrustworthy domains (for example, TLDs such as “.monster”), with no verifiable connection to the company’s official channels; they lacked clear certification/regulatory compliance information (e.g., markings/documentation that would be expected for a medical device) and showed signs of a superficial/inconsistent returns policy. Taken together, these features (brand impersonation, unrealistic medical claims, lack of substantiation, and aggressive sales tactics) strongly indicated organized deception, with risk of financial loss and potential health risk for users.

2.2.2 Brief explanation of their significance

Analysis of the cases covered by this report reveals recurring patterns:

- Brand impersonation: Systematic use of large brand identities (and, in some cases, references to public-health bodies/symbols) to create a veneer of legitimacy and credibility for fraudulent campaigns.

- Harvesting financial data and redirecting to external domains: Many scams redirect users off-platform to fake websites where sensitive payment data and/or personal data are requested.

- Fabricated testimonials and ratings: Extensive use of fake reviews, comments, and “testimonials” (sometimes showing signs of reused templates) to create false social proof and pressure users into purchases.

- False scarcity and urgency nudges: Frequent use of countdown timers, “limited availability,” aggressive calls to action, and techniques that reduce the user’s time for critical thinking.

- Misleading medical claims and public-health risk: A substantial portion of cases rely on “cure” or “diagnosis” claims (especially for diabetes) and promote products of questionable origin/compliance, targeting vulnerable users.

- Reuse of scam templates and scaling: Structural similarities across cases indicate reuse of “scam templates” and likely operation of networks that transfer/adapt campaigns to new brands or “products” (e.g., shifting from “brand-discount phishing” to “medical device / smartwatch” scams).

2.3 Trends and analysis of reported topics

The dominant pattern we identified in 2025 is a large-scale cluster of misleading advertisements of a medical/health nature, especially content promoting “non-invasive” glucose-measurement devices or multifunction “diagnostic” devices, paired with broad and highly inflated promises of detection/diagnosis.

This pattern combined repeated, mutually reinforcing indicators of illegality, such as:

- False or misleading medical claims, including claims of extremely high accuracy and seemingly impressive but unsubstantiated detection/treatment capabilities, increasing public-health risk when targeting vulnerable groups (e.g., people with diabetes).

- Consumer-rights violations and trader-transparency deficiencies, including repeated absence of trader identity, vague terms, and non-compliant distance-selling information.

- Signs of networked/repeated campaign behavior, such as recurring advertiser-account IDs across multiple cases and systematic replication of the same scam pattern across many ads.
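The last indicator, advertiser-account IDs that recur across cases, lends itself to a simple grouping step. The sketch below is illustrative only: the case records, field names, and IDs are hypothetical placeholders rather than FactReview data, and the threshold of two cases is an assumption.

```python
from collections import defaultdict

# Hypothetical case records; case labels and advertiser IDs are placeholders.
cases = [
    {"case": "A", "advertiser_ids": ["adv-001", "adv-002"]},
    {"case": "B", "advertiser_ids": ["adv-002"]},
    {"case": "C", "advertiser_ids": ["adv-003", "adv-002", "adv-001"]},
    {"case": "D", "advertiser_ids": ["adv-004"]},
]


def recurring_advertisers(records, min_cases=2):
    """Map each advertiser ID seen in at least `min_cases` cases to those cases."""
    seen = defaultdict(set)
    for record in records:
        for advertiser_id in record["advertiser_ids"]:
            seen[advertiser_id].add(record["case"])
    return {
        advertiser_id: sorted(found)
        for advertiser_id, found in seen.items()
        if len(found) >= min_cases
    }


recurring = recurring_advertisers(cases)
```

With the placeholder data, `recurring` maps `adv-001` to cases A and C and `adv-002` to cases A, B, and C. A result like this is only a lead for further investigation, not proof of a coordinated network.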

3. Independence and transparency

3.1 Impartiality safeguards

FactReview applies strict policies and structural safeguards to maintain impartiality and editorial independence across all Trusted Flagger activities:

- Editorial independence and transparent procedures: All flagging decisions are taken by the editorial team after reviewing all available evidence, with review by two or more people both for the underlying cases and for the supporting documentation. There is no influence from external partners, advertisers, platforms, or public authorities.

- Conflict-of-interest policy: Staff and collaborators are required to declare potential conflicts (financial, familial, political) and to recuse themselves from cases where such conflicts could affect judgment.

- External oversight: FactReview is a member of the International Fact-Checking Network (IFCN), the European Fact-Checking Standards Network (EFCSN), and the European Digital Media Observatory (EDMO). Membership entails regular independent audits of editorial processes and compliance with international standards of accuracy, impartiality, and transparency.

- Public transparency: FactReview’s operating rules, conflict-of-interest policies, and annual evaluations are published on the organization’s website. All notices we submit as a trusted flagger are based exclusively on objective legal and factual assessments and are also published on our site.

3.2 Legal entity and funding

There were no changes to FactReview’s legal form or ownership structure in 2025. The organization operates under DIGITAT ΔΙΑΔΙΚΤΥΑΚΗ ΕΝΗΜΕΡΩΣΗ O.E. with Greek tax identification number (ΑΦΜ) 801973786.

FactReview’s 2025 income came from grants managed by the EFCSN and IFCN networks.

The EFCSN funding supported a public “prebunking” program against disinformation (“Prebunking at Scale”). That program was funded by Google.org, Google’s philanthropic arm.

The IFCN funding came from a program supporting new network organizations (“BUILD”) and was used to support FactReview’s explanatory journalism and project management. That funding was provided by Google and YouTube.

In both cases, FactReview’s independence was ensured because program management and the decision on allocating funds among network organizations rested with the networks themselves and their own integrity-protecting criteria. The programs were drafted on the basis of full operational independence by the network participants, with only minimal requests to comply with Google policies (for example, regarding privacy terms).

All relevant funding sources are publicly accessible on FactReview’s official website. The corresponding financial report for 2025 will be available soon in the relevant section.

4. FactReview activity beyond submitting notices to online platforms

4.1 Presentation of a special report to the IMCO Committee

In March 2025, FactReview participated, by invitation, in a meeting of the European Parliament’s Committee on the Internal Market and Consumer Protection (IMCO). FactReview was the only Trusted Flagger representative from across Europe in attendance, and it presented its report on problems identified in the context of implementing the Digital Services Act.

During its IMCO intervention, FactReview presented the first special report produced in its capacity as a trusted flagger, choosing to focus on Meta due to: (a) its status as a VLOP, (b) the especially widespread use of its services in the EU, and (c) the systematic exposure of users to advertising fraud and deception. The report argued that, notwithstanding the company’s transparency statements, critical aspects of how advertising oversight and enforcement are implemented remain unclear and difficult to verify, particularly because the process relies heavily on automated systems without sufficient, auditable data to show consistent and effective enforcement.

It also highlighted limited human oversight, especially for less represented languages and markets, citing the imbalance of users per content moderator and especially low staffing levels in countries such as Greece, an issue directly tied to the practical ability to fulfill DSA obligations for effective notice handling and systemic-risk mitigation.

In this context, the presentation linked the report’s findings to EU-level processes concerning Meta’s compliance with the DSA, emphasizing that the problems are systemic rather than isolated. Specifically, the issues range from deficiencies in labeling and detecting political ads (allowing avoidance of heightened transparency requirements during election periods), to the continued activity of organized disinformation networks, and the wide dissemination of financial scams through advertisements. The report also pointed to the limited effectiveness of user reports for obvious scams and the absence of a clear, functional escalation mechanism to human review, as well as instances of asymmetric enforcement (where content exposing suspicious networks or scams is restricted or removed without any practical avenue for appeal). The report concluded with concern about an “apparent conflict of interest,” given that the business model relies almost exclusively on advertising revenue, and it proposed concrete policy and oversight measures.

Indicative recommendations included: requiring extensive, technically substantiated and auditable information on how ad oversight is implemented; materially increasing human resources (especially for underrepresented languages); and establishing mandatory manual review/approval for campaigns with suspicious characteristics, using criteria that can be independently audited.

The full special report is available here.

A video of the IMCO presentation is also available here.

4.2 Presentation of the Trusted Flagging program at GlobalFact12

At GlobalFact, the annual international fact-checking conference organized by the IFCN, FactReview presented the Trusted Flagger program in a concise and practical way. The 2025 edition took place in Rio de Janeiro, Brazil, in June 2025.

Based on our experience to date, the fact-checking community still lacks sufficient awareness of the program, both in terms of what it is and how it works, and in terms of its effects and safeguards. This also concerns the tools available in the EU for rapid response to potentially harmful illegal content.

In the presentation, we explained the relationship between the DSA and the Trusted Flagging mechanism and described the core implementation process. We highlighted the point of contact between fact-checking and flagging illegal content, without conflating the two roles, while also addressing common misunderstandings, particularly concerns about “censorship.” We emphasized that the trusted flagger role concerns documented notices in unambiguous cases with a clear and applicable legal framework. The final decision remains with platforms and is subject to transparency and accountability obligations.

Finally, we underlined that Trusted Flagging operates as a complement to fact-checking, not as a competitor. It is an “emergency” tool when immediate harm reduction is required. Because best practices are still emerging, awareness and active participation by sector organizations are critical.

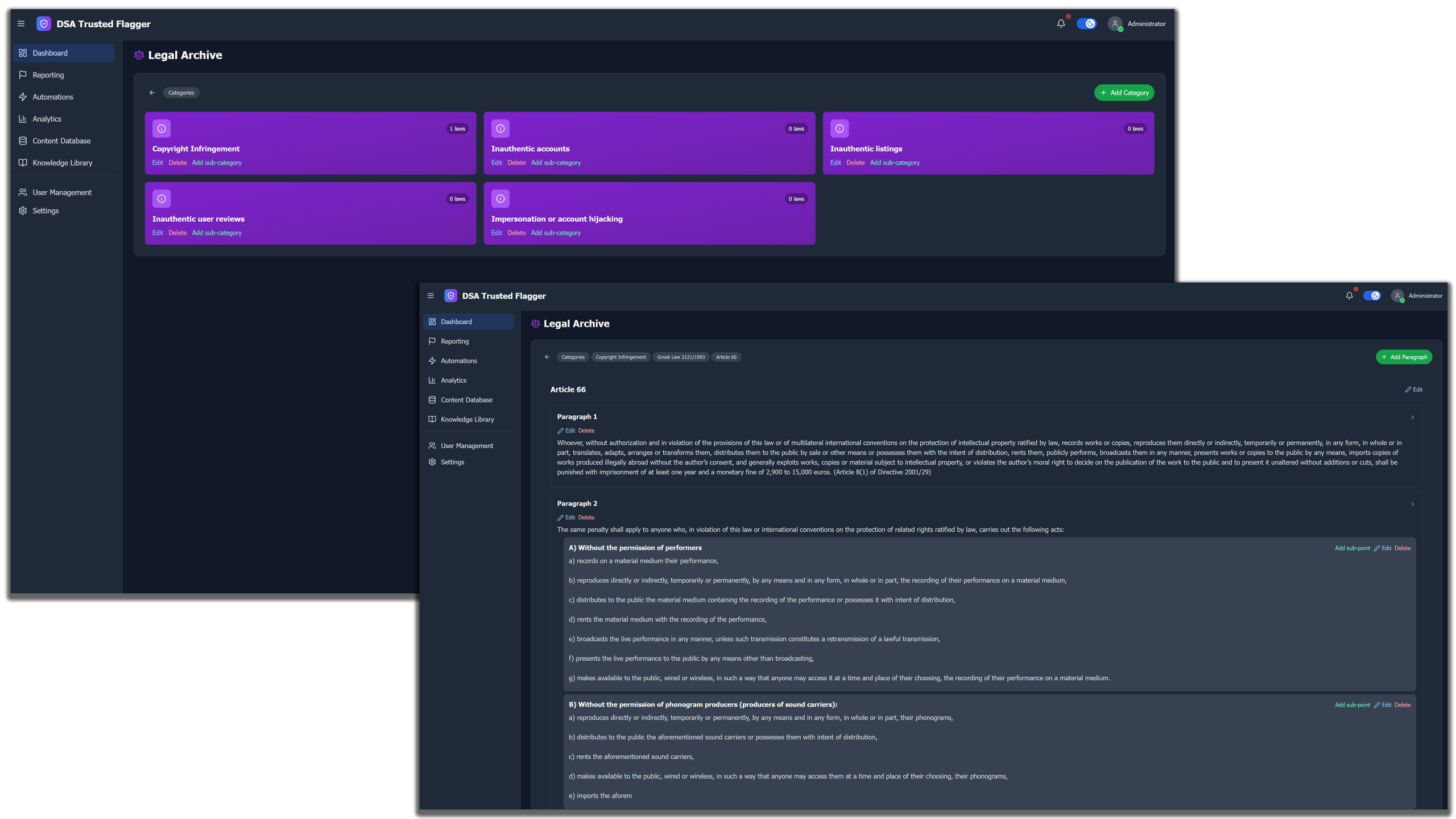

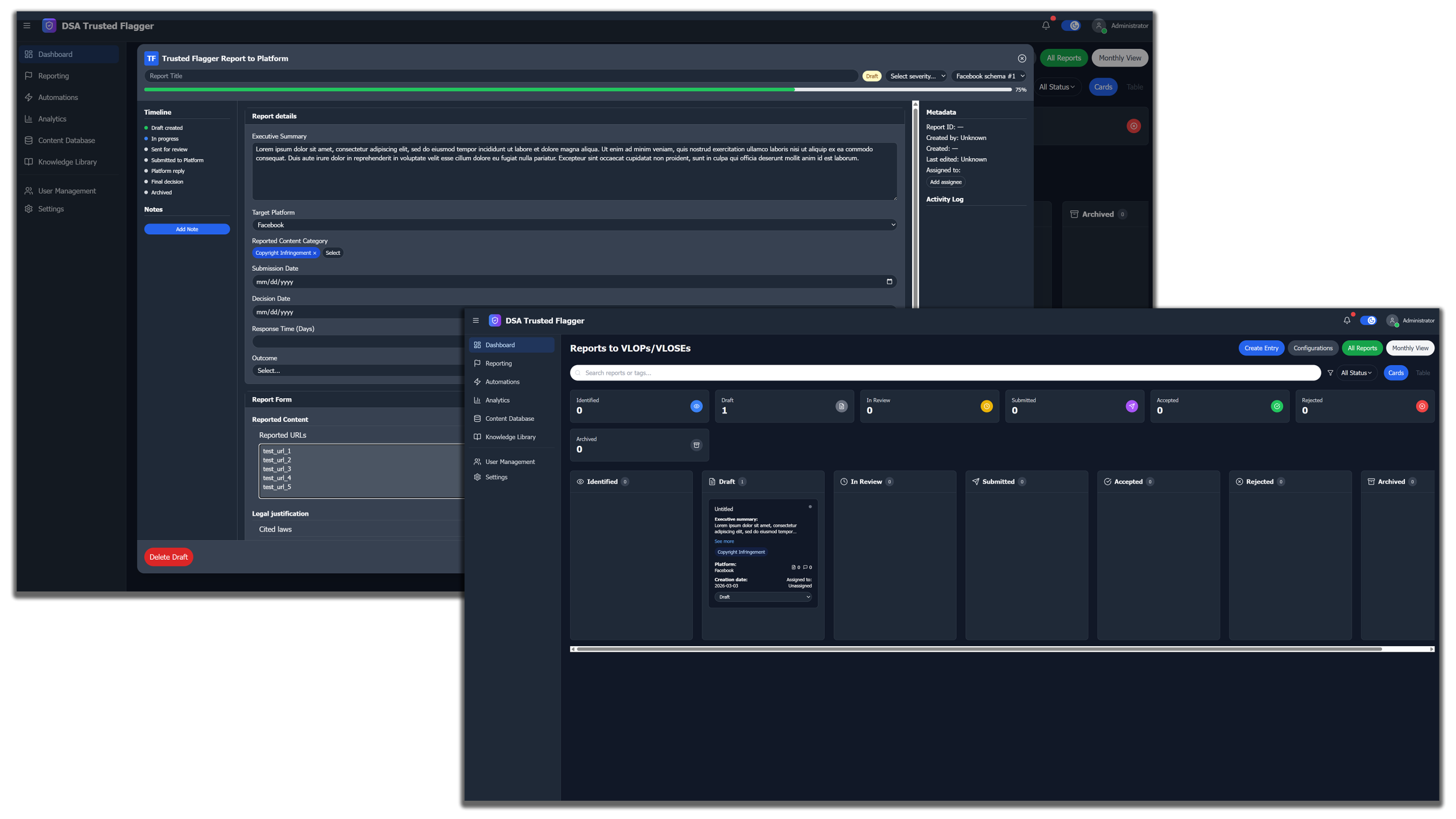

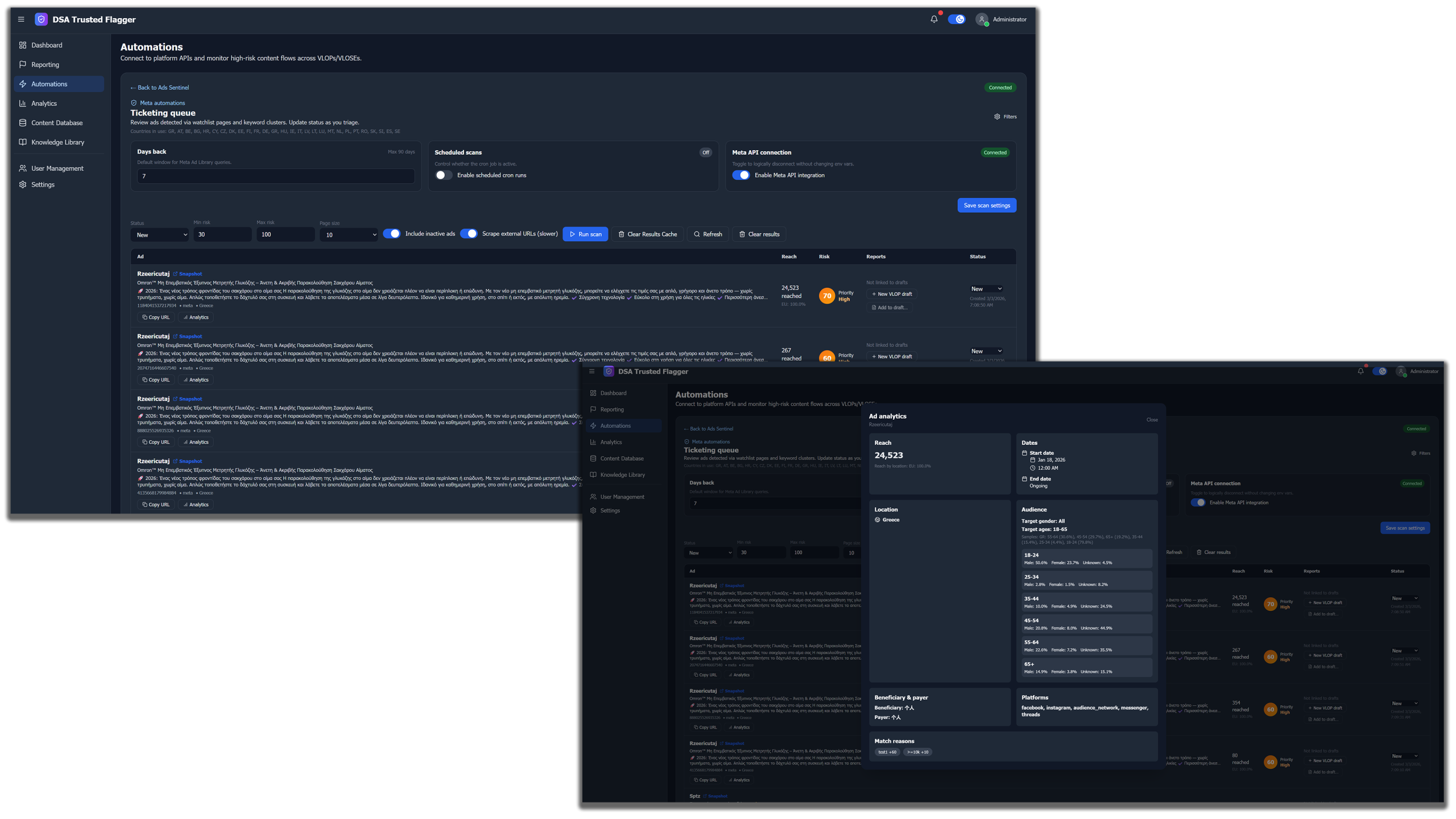

4.3 Development of a platform to standardize and automate Trusted Flagging workflows

Given the volume of material that organizations participating in Trusted Flagging are required to analyze, and the limited resources available for this work, FactReview drew on its experience in developing targeted technology tools and began building a dedicated platform; development continues into 2026.

This platform will allow Trusted Flagger organizations to organize their work more effectively and automate repeated steps in the process, making identification, substantiation, and preparation of notices faster and more efficient.

Specifically, the platform, still in alpha and being tested systematically before being offered to other trusted flaggers, includes a module that stores legal code in a dedicated digital format. That format supports both: (a) easy use of the appropriate legislation per notice, and (b) analysis of the full legal repository by an AI system, with the aim of providing immediate suggestions mapped to the specific potentially illegal material under review by a trusted flagger.

In addition, the platform will provide the ability to create report templates for each online platform, and to archive cases in a format that supports later analysis. This facilitates providing information both to the relevant Digital Services Coordinator and to EU bodies, if requested.

Finally, the platform enables integration with advertising libraries (or similar tools) offered by online platforms (such as Meta’s Ad Library) and provides more detailed, organization-specific ad search, based on categories and keywords defined by each organization.

It also supports automated periodic searches and creation of a recommendation list of potentially illegal ads for review by the organization and possible inclusion in a subsequent notice. In this way, the time required for discovery and notice preparation is significantly reduced, while full human control over the process and confirmation of the data included in each submission is maintained.
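The recommendation step described above can be pictured as a keyword filter that produces a queue for human review. The sketch below is a minimal illustration under stated assumptions, not the platform's actual implementation: the `Ad` record, the `KEYWORDS` mapping, and the sample ads are hypothetical, and the ads are assumed to have been retrieved already from an ad library (no platform API is called here).

```python
from dataclasses import dataclass


@dataclass
class Ad:
    ad_id: str
    text: str


# Hypothetical organization-defined keyword lists per category.
KEYWORDS = {
    "health": ["non-invasive", "blood sugar", "cure"],
    "brand-discount": ["secret discount", "former employee"],
}


def recommend_for_review(ads, keywords=KEYWORDS):
    """Return (ad_id, matched categories) for ads that match any keyword.

    The result is only a queue for human review, never a decision.
    """
    queue = []
    for ad in ads:
        lowered = ad.text.lower()
        matched = sorted(
            category
            for category, terms in keywords.items()
            if any(term in lowered for term in terms)
        )
        if matched:
            queue.append((ad.ad_id, matched))
    return queue


sample_ads = [
    Ad("ad-1", "Smartwatch with NON-INVASIVE blood sugar measurement"),
    Ad("ad-2", "Weekend hiking gear guide"),
    Ad("ad-3", "Former employee reveals secret discount"),
]
review_queue = recommend_for_review(sample_ads)
```

Here `review_queue` contains `ad-1` under `health` and `ad-3` under `brand-discount`, while `ad-2` is left out. Plain substring matching over-flags and under-flags, which is one reason every queued item still needs confirmation by a reviewer before it is included in a notice.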

In parallel, FactReview is conducting research on automated identification of scam advertisements on social networks. Our preliminary data indicate that, in many categories, identification is feasible with very high accuracy and low cost, enabling large-scale deployment.

Below, we present, at a high level, the flow for identifying scam ads on Pinterest that impersonate well-known brands:

Such techniques are being studied and developed carefully, and will be used only to collect suspicious material that is then reviewed thoroughly by a human expert.

5. Challenges and recommendations

5.1 Obstacles and issues identified

The core of our 2025 experience was that the “legal obligation to prioritize” trusted flagger notices does not automatically translate into operational effectiveness. Without common, implementable directions, without technical interoperability, and without adequate resources, day-to-day work tends to become a high-cost manual process: identifying, documenting, legally substantiating, submitting, and tracking the progress of notices.

Both FactReview and many other trusted flagger organizations have identified a lack of clear operational guidance at EU level regarding “common” processes and the interpretation/mapping of what constitutes illegal content, especially in “borderline” cases that intersect criminal law, consumer protection, public health, and advertising rules. At the same time, the lack of technical tools and unified platform integrations, also highlighted in our first official report, remains unresolved.

FactReview continues to encounter obstacles due to the low accessibility and transparency of ad-search tools on certain platforms (for example, limitations by X to account-level search and basic data extraction, without a searchable per-ad database or keyword-based search). This undermines proactive detection and escalation of potentially illegal ads, particularly when illegality is associated with patterns or networks.

Another obstacle is legal uncertainty around content that is illegal “by country” rather than uniformly across the EU. What constitutes illegal content is defined by national and EU legal frameworks, and content found to be illegal is removed only in the territory where it was identified. This creates genuine difficulties for escalation and documentation when a case involves cross-border targeting, multilingual creatives, or “hybrid” scam formats.

A particularly significant constraint is the lack of funding and relevant tooling for trusted flagger organizations. The European Commission itself recognizes (in its 2025 answer to the European Parliament) that trusted flagger activities “require adequate support,” without, however, setting out clear intent or instruments for providing such support.

5.2 Process improvements and new focus areas

5.2.1 For platforms

Online platforms should undertake substantive improvements to reporting tools so they support efficient, well-substantiated submissions (e.g., the ability to export reports and data; structured documentation fields). At the same time, they should remove practices that make reporting difficult or discouraging (unnecessary steps; limits on the number of URLs that can be submitted in one report for the same scam case), because these undermine the speed and traceability required by the DSA framework. In addition, meaningful upgrades are needed for advertising repositories and search/transparency mechanisms so they permit detection of scam patterns and illegal campaigns, not just isolated ads, thereby strengthening proactive detection and effective response to systemic phenomena.

5.2.2 For regulators (the National Digital Services Coordinator and peer authorities)

We recommend integrating more bodies into the program, with a focus on entities that can serve as substantive resources for effective Trusted Flagging implementation. Specifically, direct cooperation with bodies such as the National Organization for Medicines (EOF), an idea already discussed with Greece’s Digital Services Coordinator, as well as with civil-society organizations, would be a significant step toward strengthening and supporting the work of active trusted flaggers.

5.2.3 For EU institutions

For Trusted Flagging to operate effectively and consistently across Europe, it is crucial for EU institutions to issue clear, practically applicable guidelines for trusted flaggers, with minimum common operational standards (templates/forms; required fields; realistic timelines; feedback and traceability rules). In parallel, a dedicated section is needed on “borderline content” and cross-border enforcement issues, to reduce divergence in practice. Finally, we propose development of a shared infrastructure/toolkit for comparative effectiveness evaluation, with comparable performance indicators by illegality category and language, so that problems are identified early and addressed before becoming systemic.

Editorial team